I have a love/hate relationship with the Catalogue of Life (CoL). On the one hand, it's an impressive achievement to have persuaded taxonomists to share names, and to bring those names together in one place. I suspect that Frank Bisby would feel that the social infrastructure he created is his lasting legacy. That social infrastructure is arguably more impressive than the informatics infrastructure; in particular, the Catalogue has consistently failed to support globally unique identifiers for its taxa.

If you visit the CoL web pages, you will see Life Science Identifiers (LSIDs) for taxa, such as urn:lsid:catalogueoflife.org:taxon:d242422d-2dc5-11e0-98c6-2ce70255a436:col20130401 for the African elephant Loxodonta africana. The rationale for using LSIDs in CoL is explained in the following paper:

Jones, A. C., White, R. J., & Orme, E. R. (2011). Identifying and relating biological concepts in the Catalogue of Life. Journal of Biomedical Semantics, 2(1), 7. doi:10.1186/2041-1480-2-7

This paper describes the implementation in great detail, but this is all for nought as CoL LSIDs don't resolve. In fact, as far as I'm aware, CoL LSIDs have not resolved since 2009. Here is a major biodiversity informatics project that seems incapable of running an LSID service. These LSIDs are appearing in other projects (e.g., Darwin Core Archives harvested by GBIF), but they are non-functioning. Anyone using these LSIDs on the assumption that they are resolvable (or, indeed, that CoL cared enough about them to ensure they were resolvable) is sadly mistaken.

Jones et al. list some projects that use CoL LSIDs, including the Atlas of Living Australia (ALA). While I have seen CoL LSIDs used by ALA in the past, it now seems that they've abandoned them. If we resolve an LSID such as urn:lsid:biodiversity.org.au:afd.name:433239 (Dromaius novaehollandiae) using, say, the TDWG resolver, we see the following LSID: urn:lsid:biodiversity.org.au:col.name:6847559. This corresponds to the record for Dromaius novaehollandiae in the 2011 edition of the Catalogue of Life. ALA have constructed their own LSID using an internal identifier from CoL. This is the very situation that working CoL LSIDs should have made unnecessary. As Jones et al. note:

Prior to the introduction of LSIDs, the CoL was criticized for using identifiers which changed from year to year [29]. The internal identifiers have never been intended to be used in other systems linking to the CoL, of course, but this criticism draws attention to the demand for persistent identifiers that are designed for use by other systems. The CoL still does not guarantee to maintain the same internal identifiers, because there appears to be no need to insist on this as a requirement, but it does now provide persistent globally unique, publicly available identifiers.

That would be fine if, in fact, the identifiers were persistent. But they aren't. Because CoL have been either unable or unwilling to support their own LSIDs, ALA have had to work around this by minting their own LSIDs for CoL content! Note that these ALA LSIDs are tied to a specific version of CoL. Record 6847559 exists in the 2011 edition (http://www.catalogueoflife.org/annual-checklist/2011/details/species/id/6847559) but not in the latest (2013), where Dromaius novaehollandiae is now http://www.catalogueoflife.org/annual-checklist/2013/details/species/id/11908940.

Versioning LSIDs

One of the features of LSIDs that has caused the most heartache is versioning. Just because this feature is there doesn't mean it is necessary to use it, and yet some LSID providers insist on versioning every LSID. CoL is one such example, so with every release the LSID for every taxon changes. In my opinion, versioning is one of the most discussed and most over-rated features of any identifier. Most people, I suspect, don't want a version; they want the latest version. They want links that will always take them to the current version. This is how Wikipedia works, and this is how DOIs work (see CrossMark). In both cases you can see that other versions exist, and go to them if needed. But by putting versions front and centre, and by not enabling the user to simply link to the latest version, CoL have made things more complicated than they need to be.
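To make the versioning point concrete: an LSID has the form urn:lsid:authority:namespace:object, optionally followed by :revision, and CoL puts the release (e.g. col20130401) in that revision slot. Dropping the revision gives a string that would stay the same from release to release, provided the object identifier does, which is roughly the "link to the latest" behaviour I'd like. Below is a minimal Python sketch of that split. `LSID`, `parse_lsid` and `unversioned` are hypothetical names of my own, not part of any LSID library, and the parser assumes no component contains a colon. I don't know whether CoL's resolver (when it worked) would have accepted an LSID with the revision stripped off.

```python
from typing import NamedTuple, Optional


class LSID(NamedTuple):
    """Components of an LSID: urn:lsid:authority:namespace:object[:revision]."""
    authority: str
    namespace: str
    object_id: str
    revision: Optional[str] = None

    def unversioned(self) -> str:
        """Return the LSID with the revision component dropped."""
        return f"urn:lsid:{self.authority}:{self.namespace}:{self.object_id}"


def parse_lsid(text: str) -> LSID:
    """Split an LSID string into its parts.

    Hypothetical helper; assumes no component contains a colon.
    Raises ValueError if the string does not look like an LSID.
    """
    parts = text.split(":")
    if len(parts) not in (5, 6) or [p.lower() for p in parts[:2]] != ["urn", "lsid"]:
        raise ValueError(f"not an LSID: {text!r}")
    revision = parts[5] if len(parts) == 6 else None
    return LSID(parts[2], parts[3], parts[4], revision)


if __name__ == "__main__":
    a = parse_lsid("urn:lsid:catalogueoflife.org:taxon:"
                   "d242422d-2dc5-11e0-98c6-2ce70255a436:col20110201")
    b = parse_lsid("urn:lsid:catalogueoflife.org:taxon:"
                   "d242422d-2dc5-11e0-98c6-2ce70255a436:col20130401")
    print(a.revision)                            # col20110201
    print(a.unversioned() == b.unversioned())    # True
```

The 2011 and 2013 LSIDs for the African elephant differ only in the revision, so their unversioned forms match; that is the kind of stable link most users would want to cite.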

Changing LSIDs

It needs to be understood that in relation to concepts the Catalogue is intentionally not stable, so if a client is wishing to link to a name, not a concept, the client should use any LSID available for the name (or just the name itself), not a CoL-supplied taxon LSID. It should also be noted that it is intended that deprecated concepts will be accessible via their LSIDs in perpetuity, and the metadata retrieved will include information about the concepts’ relationships to relevant current concepts (such as inclusion, etc.). - Jones et al. p. 14

Leaving aside the fact that CoL clearly has a different notion of "perpetuity" to the rest of us, the notion that identifiers change when content changes is potentially problematic. If a taxonomic concept changes, CoL will mint a new LSID. While I understand the logic, imagine if other databases did this. Imagine if the NCBI decided that, because the African elephant was two species instead of one (see doi:10.1126/science.1059936), they should change the NCBI tax_id of Loxodonta africana (tax_id 9785, first used in 1993) because our notion of what "Loxodonta africana" meant had changed. Imagine the chaos this could cause downstream for all the databases that build upon the NCBI taxonomy, which would now link to an identifier the NCBI had dropped. Instead, NCBI simply added a new identifier for Loxodonta cyclotis. Yes, this means the notion of "Loxodonta africana" may now be ambiguous (if an elephant was sequenced before 2001, did the authors sequence Loxodonta africana or Loxodonta cyclotis?), but given the choice I suspect most could live with that ambiguity (as opposed to rebuilding databases).

But even if we accept CoL's approach of changing LSIDs when the concept changes, surely concepts that don't change should always have the same LSID (apart from the version at the end)? It turns out this is not always the case. For example, here are the CoL LSIDs for Loxodonta africana from 2008 to 2013:

urn:lsid:catalogueoflife.org:taxon:de5724e4-29c1-102b-9a4a-00304854f820:ac2008

urn:lsid:catalogueoflife.org:taxon:de5724e4-29c1-102b-9a4a-00304854f820:ac2009

urn:lsid:catalogueoflife.org:taxon:24f8e252-60a7-102d-be47-00304854f810:ac2010

urn:lsid:catalogueoflife.org:taxon:d242422d-2dc5-11e0-98c6-2ce70255a436:col20110201

urn:lsid:catalogueoflife.org:taxon:d242422d-2dc5-11e0-98c6-2ce70255a436:col20120124

urn:lsid:catalogueoflife.org:taxon:d242422d-2dc5-11e0-98c6-2ce70255a436:col20130401

The UUID part of these LSIDs has changed twice. But in each release of these versions of CoL there have

. How is the 2008 concept of
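Mechanically, the complaint about the elephant LSIDs listed above comes down to comparing the object identifier (the UUID) of consecutive releases. Here is a minimal Python sketch that does just that for those six LSIDs. `object_id_changes` is a hypothetical helper of my own; it assumes the LSIDs are in release order and that each has exactly the form urn:lsid:authority:namespace:object:revision with no colons inside a component. It only tells you whether the identifier changed, not whether the underlying concept did.

```python
# The CoL LSIDs for Loxodonta africana, 2008 to 2013, in release order.
LSIDS = [
    "urn:lsid:catalogueoflife.org:taxon:de5724e4-29c1-102b-9a4a-00304854f820:ac2008",
    "urn:lsid:catalogueoflife.org:taxon:de5724e4-29c1-102b-9a4a-00304854f820:ac2009",
    "urn:lsid:catalogueoflife.org:taxon:24f8e252-60a7-102d-be47-00304854f810:ac2010",
    "urn:lsid:catalogueoflife.org:taxon:d242422d-2dc5-11e0-98c6-2ce70255a436:col20110201",
    "urn:lsid:catalogueoflife.org:taxon:d242422d-2dc5-11e0-98c6-2ce70255a436:col20120124",
    "urn:lsid:catalogueoflife.org:taxon:d242422d-2dc5-11e0-98c6-2ce70255a436:col20130401",
]


def object_id_changes(lsids: list[str]) -> list[tuple[str, str]]:
    """Return (previous_revision, next_revision) pairs where the object ID differs.

    Hypothetical helper; compares each LSID with the one before it.
    """
    changes = []
    for prev, curr in zip(lsids, lsids[1:]):
        # The last two colon-separated parts are the object ID and the revision.
        *_, prev_obj, prev_rev = prev.split(":")
        *_, curr_obj, curr_rev = curr.split(":")
        if prev_obj != curr_obj:
            changes.append((prev_rev, curr_rev))
    return changes


if __name__ == "__main__":
    for before, after in object_id_changes(LSIDS):
        print(f"object ID changed between {before} and {after}")
```

Run on the list above, it reports two changes: one between ac2009 and ac2010, and another between ac2010 and col20110201. A client that had stored the 2008 LSID and stripped the revision would therefore still be left with an identifier CoL no longer uses.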

As we start to tackle issues such as data quality and annotation, having persistent, resolvable, globally unique identifiers will matter more than ever. Shared identifiers are the glue that helps us bind diverse data together. The tragedy of LSIDs is that they could have been this glue if our community had chosen to invest even a fraction of the effort CrossRef invested in DOIs. Unfortunately, we are now left with web sites and databases littered with LSIDs that simply don't work (CoL is not the only offender in this regard).

Resolvable identifiers mean we can actually get information about the things identified, as well as serving as a litmus test of the credibility of a resource (if I give you a URL and the URL doesn't work, you may doubt the value of the information on the end of that link). In a networked world, the trustworthiness of a resource is closely bound to its ability to maintain identifiers. The Catalogue of Life fails this test.

There is a fairly scathing editorial in Nature [The new zoo. (2013). Nature, 503(7476), 311–312].



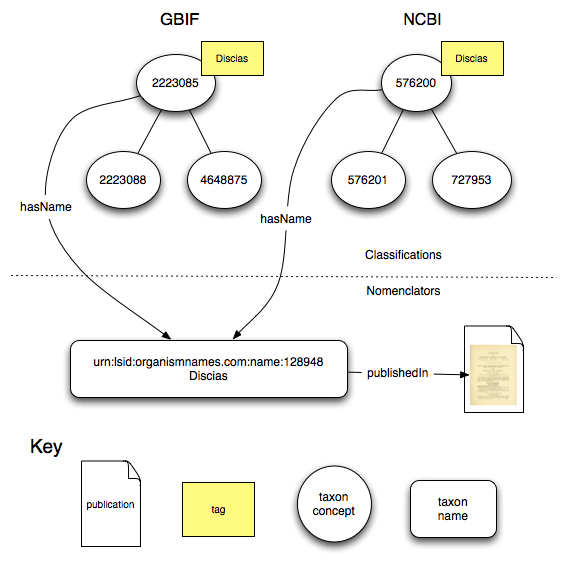

This is a quick sketch of a way to combine existing tools to help clean and annotate data in GBIF, particularly (but not exclusively) occurrence data.
